Deep learning can be distinguished from machine learning in general because it learns a hierarchy of structures from the training data. Although other deep techniques exist, the phrase “deep learning” is used almost exclusively to describe deep neural networks.

Deep learning and image recognition

A common application of deep learning is the recognition of objects in images. The input training data is a set of images, each of which consists of many thousands of pixels. Each pixel of each image is represented by a real number between zero and one. Zero indicates black and one indicates white (assuming, for the sake of simplicity, that the images are greyscale rather than color). The target outcomes are a set of labels such as “cat”, “chair” or “car”.
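As a minimal sketch of this representation, the snippet below scales a tiny greyscale image to the zero-to-one range and flattens it into one input vector. The 8-bit source values (0 to 255), the 2x3 image, and the names `raw_image`, `scale_pixels` and `flatten` are assumptions made for illustration only.

```python
# Hypothetical 2x3 greyscale image stored as 8-bit values (assumed: 0 = black, 255 = white).
raw_image = [
    [0, 128, 255],
    [64, 192, 32],
]

def scale_pixels(image, max_value=255):
    """Map integer pixel values to real numbers between 0.0 and 1.0."""
    return [[pixel / max_value for pixel in row] for row in image]

def flatten(image):
    """Turn the 2-D grid of pixels into a single input vector."""
    return [pixel for row in image for pixel in row]

inputs = flatten(scale_pixels(raw_image))  # one training example
label = "cat"                              # illustrative target label for this image
```

A real training set would hold many such `(inputs, label)` pairs, each with thousands of pixels rather than six.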

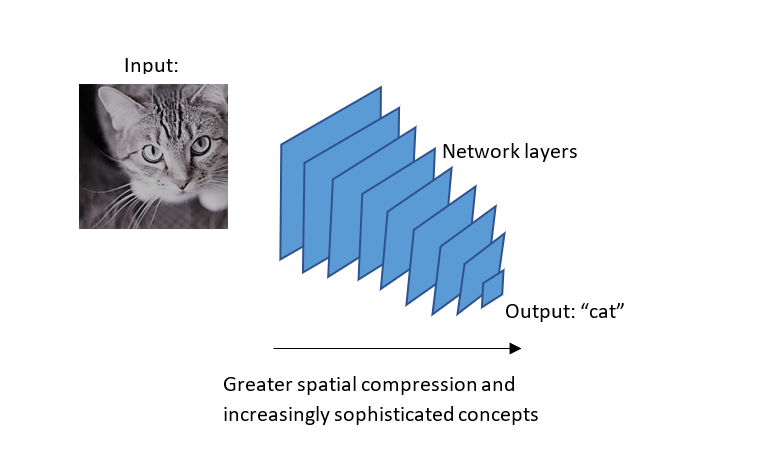

A deep neural network consists of many layers of neurons. During training, the shallower layers (closer to the input data) learn to identify simple concepts such as edges, corners or circles. Progressing deeper into the network, each successive layer learns increasingly complex concepts, such as eyes or wheels. The final layer learns the target labels.

Through this process, information is distilled and compressed, starting with the raw pixel values and ending with a general concept. This is illustrated above.
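The layered structure can be sketched as a forward pass through a small fully connected network. This is an illustrative sketch, not a trained model: the layer sizes, the ReLU and softmax functions, the random weights and every name below are assumptions chosen for the example, so the resulting probabilities carry no meaning until the network is trained.

```python
import math
import random

def relu(values):
    """Keep positive values and replace negative ones with zero."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Convert raw scores into probabilities that sum to one."""
    largest = max(values)
    exps = [math.exp(v - largest) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def dense(inputs, weights, biases):
    """One layer: each neuron computes a weighted sum of its inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def random_layer(n_in, n_out, rng):
    """Untrained layer with small random weights and zero biases."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

rng = random.Random(0)
labels = ["cat", "chair", "car"]
layer_sizes = [16, 8, 4, len(labels)]  # 16 pixel inputs narrowing to 3 label scores
layers = [random_layer(n_in, n_out, rng)
          for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]

def forward(pixels):
    """Pass the pixels through each hidden layer, then score the labels."""
    activations = pixels
    for weights, biases in layers[:-1]:
        activations = relu(dense(activations, weights, biases))
    weights, biases = layers[-1]
    return softmax(dense(activations, weights, biases))

pixels = [rng.random() for _ in range(layer_sizes[0])]  # stand-in for a scaled image
probabilities = forward(pixels)                         # one probability per label
```

Each layer here is narrower than the one before it, which mirrors the idea of compressing raw pixel values into a small set of label scores.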

As well as images, deep learning can be applied to video, text (i.e., natural language processing) and audio (e.g., speech) data.

Recent growth

Recently, the popularity and application of deep learning have increased significantly. This can be attributed to three main technological factors:

- The availability of large data sets. The internet has created and enabled the sharing of vast amounts of data from sources like social media, the internet-of-things, and e-commerce. Furthermore, specialist domains such as astronomy, healthcare and finance have their own electronic data, which has been made more accessible by advances in databases and storage.

- Increasing computation power. The general increase in processing power associated with Moore’s law has benefited all computing. More specifically, deep learning relies heavily on linear algebra, which runs efficiently on GPU and ASIC chips designed for parallel processing.

- Advances in learning algorithms. Convolutional and recurrent neural networks are two of the main architectures that make use of depth. New variants of gradient descent have improved training performance. Overfitting can be mitigated by regularization techniques such as dropout.
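Two of the algorithmic ideas in the last point can be sketched in a few lines. The snippet below is a simplified illustration under stated assumptions: it uses plain gradient descent with an L2 penalty on a one-parameter toy problem, and the "inverted" variant of dropout. The function names, learning rate, penalty strength and toy loss are all hypothetical choices, not taken from any particular library.

```python
import random

def dropout(activations, rate, rng):
    """Inverted dropout: zero each activation with probability `rate`
    and scale the survivors by 1 / (1 - rate)."""
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

def sgd_step(weights, gradients, learning_rate=0.1, weight_decay=0.01):
    """One gradient descent update with an L2 penalty on the weights."""
    return [w - learning_rate * (g + weight_decay * w)
            for w, g in zip(weights, gradients)]

# Toy problem (assumed for illustration): minimize (w - 3)^2, whose gradient is 2 * (w - 3).
weights = [0.0]
for _ in range(200):
    gradients = [2.0 * (weights[0] - 3.0)]
    weights = sgd_step(weights, gradients)

# Randomly silence roughly half of a layer's activations during training.
rng = random.Random(0)
dropped = dropout([1.0] * 1000, rate=0.5, rng=rng)
```

The L2 penalty pulls the learned weight slightly below 3, toward zero, which is the intended effect of regularization: it trades a little fit on the training data for smaller weights.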
