When it comes to segmenting your data, it’s not uncommon to deal with missing values. They might arise because certain questions were not asked of every respondent in a survey, or because ‘don’t know’ responses have come through in your data. Most widely used cluster analysis algorithms can be highly misleading, or can simply fail, when most or all of the observations have some missing values.

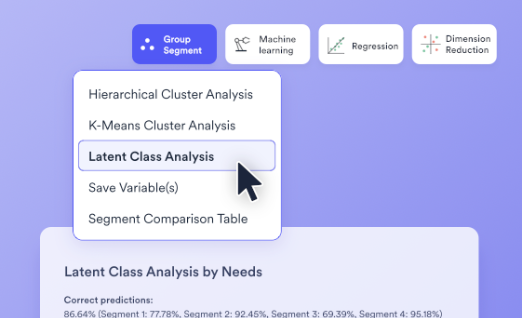

With very few exceptions, the cluster analysis techniques designed explicitly to deal with missing data are known as latent class analysis rather than cluster analysis.

Latent class analysis and missing values

Before exploring the different approaches to dealing with missing values, it’s important to first define latent class analysis. Latent class analysis, like cluster analysis, is a technique used to form segments from data. The main difference between latent class analysis and most other clustering techniques is its flexibility: unlike those algorithms, latent class analysis can create segments from a combination of categorical and numeric data, and it can accommodate complex sampling designs and weights.

It also deals with missing data more effectively. By default, most cluster analysis methods assume that data is missing completely at random (MCAR), meaning that whether a value is missing is unrelated to anything in the data, observed or not. This is a strong assumption and one that is rarely correct. Latent class analysis makes the more relaxed assumption that data is missing at random (MAR): whether a value is missing may depend on other, observed responses, but not on the missing value itself.
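To make the distinction concrete, here is a small simulation. It is a sketch under hypothetical assumptions: a toy survey in which spending rises with age, and a made-up `simulate_missingness` helper. Under MCAR every respondent has the same chance of skipping the spending question; under MAR the chance depends on their observed age. Averaging only the answered responses recovers the true mean under MCAR, but not under MAR.

```python
import numpy as np

def simulate_missingness(n=10_000, seed=0):
    """Toy survey (hypothetical): spend rises with age.

    Returns age, spend and two boolean masks in which True means the
    spend answer is missing.
    """
    rng = np.random.default_rng(seed)
    age = rng.uniform(18, 80, size=n)
    spend = 100 + 2.0 * age + rng.normal(0, 10, size=n)
    # MCAR: every respondent has the same 30% chance of skipping.
    mcar = rng.random(n) < 0.30
    # MAR: the chance of skipping depends only on the observed age
    # (50% if over 50, otherwise 10%), not on spend itself.
    mar = rng.random(n) < np.where(age > 50, 0.50, 0.10)
    return age, spend, mcar, mar

age, spend, mcar, mar = simulate_missingness()
older = age > 50
for name, mask in [("MCAR", mcar), ("MAR", mar)]:
    print(f"{name}: missing rate older={mask[older].mean():.2f}, "
          f"younger={mask[~older].mean():.2f}, "
          f"mean of answered spend={spend[~mask].mean():.1f}")
print(f"true mean spend={spend.mean():.1f}")
```

Under MAR, older and higher-spending respondents skip the question more often, so the mean of the answered values is pulled down. A method that uses the observed ages can correct for this; one that assumes MCAR cannot.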

For instance, in a customer survey, latent class analysis could identify customer segments based on purchase behavior even if some customers didn’t answer all of the spending-related questions. It does this by estimating each customer’s segment membership from the questions they did answer. The missing responses are therefore allowed to be related to the observed answers, and hence to particular customer groups, rather than being assumed to be entirely random.
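The mechanism can be sketched with the expectation-maximization (EM) algorithm for a latent class model of yes/no items. This is a minimal illustration, not the implementation used by any particular package. The function name `lca_em`, binary items, the assumption that items are independent within a class, and the use of `np.nan` for a skipped question are all choices made for this sketch. The key step is the E-step, where each respondent’s log-likelihood is summed only over the items they answered. Under MAR (and the usual technical conditions), maximizing this observed-data likelihood gives valid estimates without modeling why answers are missing.

```python
import numpy as np

def lca_em(X, n_classes, n_iter=300, seed=0):
    """Latent class analysis for 0/1 items; np.nan marks a skipped question.

    Returns class sizes pi (K,), item probabilities theta (K, d) with
    theta[k, j] = P(item j = 1 | class k), and posterior memberships (n, K).
    """
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    observed = ~np.isnan(X)
    Xf = np.where(observed, X, 0.0)          # placeholder; masked out below
    W = observed.astype(float)
    pi = np.full(n_classes, 1.0 / n_classes)
    theta = rng.uniform(0.25, 0.75, size=(n_classes, X.shape[1]))
    for _ in range(n_iter):
        # E-step: per-class log-likelihood using only the answered items.
        ll_items = (Xf[:, None, :] * np.log(theta)[None]
                    + (1 - Xf[:, None, :]) * np.log1p(-theta)[None])
        ll = (ll_items * W[:, None, :]).sum(axis=2) + np.log(pi)
        ll -= ll.max(axis=1, keepdims=True)  # numerical stability
        resp = np.exp(ll)
        resp /= resp.sum(axis=1, keepdims=True)
        # M-step: re-estimate from observed responses only.
        pi = resp.mean(axis=0)
        theta = (resp.T @ (Xf * W)) / np.maximum(resp.T @ W, 1e-12)
        theta = np.clip(theta, 1e-6, 1 - 1e-6)
    return pi, theta, resp

# Hypothetical data: two segments answering six yes/no spending questions,
# with 20% of answers missing completely at random (a special case of MAR).
rng = np.random.default_rng(1)
true_class = rng.integers(0, 2, size=1000)
p_yes = np.where(true_class[:, None] == 0, 0.85, 0.15)
X = (rng.random((1000, 6)) < p_yes).astype(float)
X[rng.random(X.shape) < 0.20] = np.nan

pi, theta, resp = lca_em(X, n_classes=2)
labels = resp.argmax(axis=1)
# Class labels are arbitrary, so allow for them being swapped.
agreement = max(np.mean(labels == true_class), np.mean(labels != true_class))
print(f"class sizes {np.round(pi, 2)}, agreement with truth {agreement:.2f}")
```

No respondent is dropped and no value is filled in: someone who answered only two questions simply contributes a less certain membership estimate.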

Cluster analysis techniques designed for missing data

The approaches below are ordered from safest to least safe.

Impute missing values

Imputation refers to tools for predicting the values that would have existed had the data not been missing. Provided that you use a sensible approach to imputing missing values (replacing each missing value with the average of that variable’s observed values is not a sensible approach), running cluster or latent class analysis on the imputed data set treats the missing data better than the default handling in most cluster analysis software.
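As an illustration of something more sensible than mean substitution, here is a minimal nearest-neighbour imputation with NumPy. It is a sketch under assumptions: the helper name `knn_impute`, k = 5 neighbours, and a root-mean-square distance computed over the variables both rows observed are choices made for this example, not the only reasonable ones. Each missing value is filled with the average of that variable among the k most similar respondents, so the imputed value reflects the respondent’s other answers.

```python
import numpy as np

def knn_impute(X, k=5):
    """Fill each missing value with the mean of that variable among the k
    rows most similar to it on the variables both rows observed."""
    X = np.asarray(X, dtype=float)
    observed = ~np.isnan(X)
    out = X.copy()
    for i in np.flatnonzero(~observed.all(axis=1)):
        shared = observed & observed[i]              # variables seen in both rows
        n_shared = shared.sum(axis=1)
        sq = np.where(shared, (X - X[i]) ** 2, 0.0).sum(axis=1)
        dist = np.full(len(X), np.inf)
        ok = n_shared > 0
        dist[ok] = np.sqrt(sq[ok] / n_shared[ok])    # RMS distance on shared variables
        dist[i] = np.inf                             # never borrow from the row itself
        for j in np.flatnonzero(~observed[i]):
            donors = np.flatnonzero(observed[:, j] & np.isfinite(dist))
            if donors.size == 0:
                continue                             # nothing to borrow: leave NaN
            nearest = donors[np.argsort(dist[donors])[:k]]
            out[i, j] = X[nearest, j].mean()
    return out

# Hypothetical data: two groups of 200 respondents on three variables,
# with the third variable blanked for about 20% of rows.
rng = np.random.default_rng(0)
centers = np.repeat([[0.0, 0.0, 0.0], [10.0, 10.0, 10.0]], 200, axis=0)
truth = centers + rng.normal(0, 1, size=centers.shape)
X = truth.copy()
gap = rng.random(len(X)) < 0.20
X[gap, 2] = np.nan

knn = knn_impute(X)
mean_fill = np.where(np.isnan(X), np.nanmean(X, axis=0), X)

def rmse(filled):
    """Error of the imputed values against the values that were removed."""
    return np.sqrt(np.mean((filled[gap, 2] - truth[gap, 2]) ** 2))

print(f"RMSE  kNN: {rmse(knn):.2f}   mean substitution: {rmse(mean_fill):.2f}")
```

Mean substitution puts every blanked value near the middle of the two groups, which is exactly the kind of distortion that can blur segments. The imputed matrix can then be passed to whichever cluster or latent class routine you use.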

Use techniques developed for categorical data

Some cluster and latent class techniques have been developed specifically for modeling categorical data. When the data contains missing values, the variables can be treated as categorical, with each missing value recoded as an additional category, and these techniques can then be applied directly.
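A minimal sketch of the recoding step follows. The `"Missing"` label, the helper names and the simple matching dissimilarity (the share of variables on which two respondents differ, as used by k-modes-style methods) are assumptions chosen for illustration. Once missing values become a category, two respondents who both skipped a question count as agreeing on it.

```python
MISSING = "Missing"  # hypothetical label for the extra category

def recode_missing(rows):
    """Replace None with an explicit 'Missing' category in each answer."""
    return [tuple(MISSING if v is None else v for v in row) for row in rows]

def matching_dissimilarity(a, b):
    """Share of variables on which two respondents give different categories."""
    return sum(x != y for x, y in zip(a, b)) / len(a)

# Three hypothetical respondents answering: owns product? / usage / spend band
a, b, c = recode_missing([
    ("Yes", None, None),
    ("No", None, None),
    ("Yes", "Daily", "High"),
])
print(a)                              # ('Yes', 'Missing', 'Missing')
print(matching_dissimilarity(a, b))   # 0.3333333333333333
print(matching_dissimilarity(a, c))   # 0.6666666666666666
```

Respondent `a` comes out closer to `b`, who disagrees on the only question `a` answered, than to `c`, who agrees with it. This is the sense in which clusters built this way tend to be driven by missing-value patterns.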

At a theoretical level, the benefit of this approach is that it makes the fewest assumptions about missing data. The cost, however, is that the resulting clusters are often driven largely by differences in missing-value patterns, which is rarely desirable.

Form clusters based on complete cases, and then allocate partial cases to segments

A popular approach to clustering with missing values is to cluster only the complete cases, and then assign each observation with incomplete data to the most similar segment based on the data available for it. For example, this approach is used in SPSS with the Options > Missing Values > Exclude cases pairwise setting.
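The general idea can be sketched with NumPy. This illustrates the approach, not SPSS’s algorithm: plain k-means is assumed for the clustering step, and the helper names `kmeans` and `cluster_then_allocate` are hypothetical. Each incomplete row is assigned to the nearest cluster centre using only the variables it has.

```python
import numpy as np

def kmeans(X, k, n_iter=100, seed=0):
    """Plain Lloyd's k-means on a complete (NaN-free) matrix."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        new = np.array([X[labels == c].mean(axis=0) if np.any(labels == c)
                        else centers[c] for c in range(k)])
        if np.allclose(new, centers):
            break
        centers = new
    return labels, centers

def cluster_then_allocate(X, k, seed=0):
    """Cluster complete cases, then assign each partial case to the nearest
    centre using only its observed variables. Rows with nothing observed
    are left unassigned (-1)."""
    X = np.asarray(X, dtype=float)
    observed = ~np.isnan(X)
    complete = observed.all(axis=1)
    if not complete.any():
        raise ValueError("no complete cases to cluster")
    labels = np.full(len(X), -1)
    complete_labels, centers = kmeans(X[complete], k, seed=seed)
    labels[complete] = complete_labels
    for i in np.flatnonzero(~complete):
        m = observed[i]
        if m.any():
            d2 = ((centers[:, m] - X[i, m]) ** 2).mean(axis=1)
            labels[i] = d2.argmin()
    return labels, centers

# Hypothetical data: two well-separated groups, with gaps in two variables.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0, 1, (100, 3)), rng.normal(8, 1, (100, 3))])
truth = np.repeat([0, 1], 100)
X[::5, 2] = np.nan     # every 5th respondent skipped question 3
X[1::7, 0] = np.nan    # every 7th respondent (offset 1) skipped question 1
labels, centers = cluster_then_allocate(X, k=2)
agreement = max(np.mean(labels == truth), np.mean(labels == 1 - truth))
print(f"agreement with truth: {agreement:.2f}")
```

Because the centres are estimated from complete cases only, a group that exists mainly among respondents with missing data never gets a centre of its own.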

A practical problem with this approach is that if the observations with missing values differ in important ways from those with no missing values, this will not be discovered. In other words, the method assumes that all the key differences of interest are evident in the complete cases.

Form clusters based only on complete cases

The last approach is to ignore observations that have missing values and perform the analysis only on those with complete data. This does not work at all if every case has at least one missing value. Where the number of complete cases is small, the technique is inherently unreliable. Even where it is larger, the approach is biased unless the observations with missing data are identical to those with complete data apart from the “missingness” itself. That is, this approach relies on the strong MCAR assumption.
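Complete-case analysis (listwise deletion) is simple to implement, which is part of its appeal. The sketch below drops any row containing a missing value and refuses to continue when nothing, or too little, is left. The helper name `complete_cases` is hypothetical, and its `min_rows` threshold of 30 is an arbitrary placeholder, not a recommended sample size.

```python
import numpy as np

def complete_cases(X, min_rows=30):
    """Listwise deletion: keep only rows with no missing values.

    min_rows is an arbitrary placeholder threshold for this sketch.
    """
    X = np.asarray(X, dtype=float)
    keep = ~np.isnan(X).any(axis=1)
    n_keep = int(keep.sum())
    if n_keep == 0:
        raise ValueError("every case has at least one missing value")
    if n_keep < min_rows:
        raise ValueError(f"only {n_keep} complete cases; results would be unreliable")
    return X[keep], keep

# With 20 questions each skipped independently 5% of the time,
# the expected share of complete cases is 0.95 ** 20.
print(f"{0.95 ** 20:.2f}")  # 0.36

X = np.array([[1.0, 2.0], [np.nan, 3.0], [4.0, 5.0]])
kept, mask = complete_cases(X, min_rows=1)
print(kept)
```

Even modest, independent skip rates remove most respondents once a questionnaire has many items, which is why this approach often leaves a small and unrepresentative sample.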