You want to give your survey respondents the opportunity to answer open-ended questions or elaborate on their responses. But how do you analyze the free-form text data from your survey? I’ll show you three different methods and explain when you might want to use each.

What is Survey Text Analysis?

Customer feedback surveys often allow respondents to answer questions in their own words. For example, a question may ask “What is the first brand that comes to mind when you think of insurance?” or “What don’t you like about Tom Cruise?” The data generated by these questions is known variously as text data, free-form text data, verbatims, and open-ended data. There are three main ways of conducting survey text analysis: coding, text analytics, and word clouds.

How do you analyze survey responses?

1. Coding

The traditional approach to analyzing text data is to code the data. Coding works as follows:

- One or two people read through some of the data (e.g., 200 randomly selected responses) and use their judgment to identify the main categories. For example, for a question about attitudes to Tom Cruise, the categories may be: 1. Like him; 2. Hate him; 3. Don’t know who he is; and 4. Other. The list of categories and their associated codes is known as a code frame.

- Then someone reads all the text data and manually assigns a value or values to each response. Each assigned value is a code from the code frame created in the previous step. If the person said, “I really love Tom!”, the code assigned would be 1. Depending on the data, each response will be assigned either one value (single response) or multiple values (multiple response). In the case of the question “What don’t you like about Tom Cruise?” it would be appropriate to permit multiple responses.

- Variables created in the previous step are then analyzed (e.g., using frequency tables or crosstabs).
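The three steps above can be sketched in a few lines of Python. This is only an illustration: the code frame comes from the Tom Cruise example, while the responses, the codes assigned to them, and the `frequency_table` helper are hypothetical. In practice the codes would be assigned by a person reading each response.

```python
from collections import Counter

# Code frame from the example above: code -> category label.
CODE_FRAME = {
    1: "Like him",
    2: "Hate him",
    3: "Don't know who he is",
    4: "Other",
}

# Hypothetical coded data: each response is paired with the code(s)
# a human coder assigned to it (a list, so multiple response is allowed).
coded_responses = [
    ("I really love Tom!", [1]),
    ("Can't stand him", [2]),
    ("Who?", [3]),
    ("He seems great", [1]),
    ("No opinion", [4]),
]


def frequency_table(coded, code_frame):
    """Return {label: (count, share of respondents)} for each code."""
    # set(codes) ensures a respondent is counted once per code.
    counts = Counter(code for _, codes in coded for code in set(codes))
    n = len(coded)
    return {
        label: (counts[code], counts[code] / n)
        for code, label in code_frame.items()
    }


table = frequency_table(coded_responses, CODE_FRAME)
for label, (count, share) in table.items():
    print(f"{label:<22}{count:>3}{share:>8.0%}")
```

Because shares are computed per respondent, the percentages can add up to more than 100% when multiple codes per response are permitted.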


2. AI text analytics

Text analytics uses algorithms, rather than human readers, to categorize text automatically. All else being equal, text analytics is less informative than coding, as humans are better than algorithms at correctly interpreting meaning in text. For example, it is hard to train a computer to correctly analyze “I love Coke. Not!” or “Coke is wicked.”
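To see why phrases like these trip up simple algorithms, here is a minimal sketch of a keyword-matching sentiment scorer. It is a deliberately naive technique, not how modern AI tools work, and the word lists and the `naive_sentiment` function are hypothetical.

```python
import re

# Hypothetical, deliberately tiny sentiment word lists.
POSITIVE = {"love", "great", "like"}
NEGATIVE = {"hate", "awful", "wicked"}


def naive_sentiment(text):
    """Score text by counting positive and negative keywords."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


for text in ["I love Coke. Not!", "Coke is wicked."]:
    print(text, "->", naive_sentiment(text))
```

The scorer labels the first response positive because it sees “love” and ignores the sarcastic “Not!”, and labels the second negative because it treats “wicked” literally. A human coder would probably read both the other way.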

However, advances in AI have rapidly closed the gap between humans and computers when it comes to analyzing text. Researchers can now automate most of the analysis and review the output to ensure accuracy.

3. Word clouds

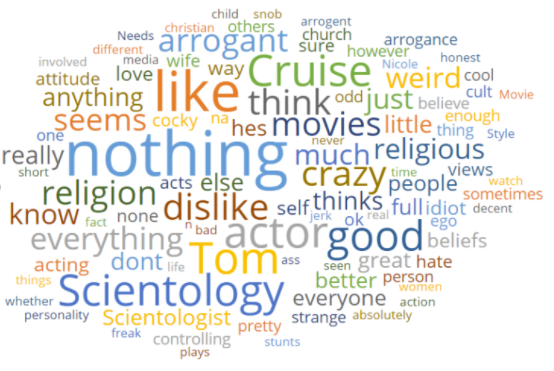

A word cloud is a visualization that shows all the words in text packaged closely together. The font size indicates the frequency with which words appear, with less interesting words (e.g., “the”) automatically excluded. Below is a word cloud of answers to “What don’t you like about Tom Cruise?” This is the most simplistic approach to analyzing text data, but it is also the cheapest and fastest.


You can adjust this word cloud to take out words that are not useful, like ‘Tom’ or ‘Cruise’. You can also tidy your word cloud using text analytics or easily show sentiment in word clouds.
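The counting behind a word cloud can be sketched as follows. This is a minimal illustration rather than Displayr's implementation: the stopword list, the sample responses, and the `word_frequencies` function are hypothetical, and a plotting tool would then map each count to a font size.

```python
import re
from collections import Counter

# Hypothetical short stopword list; real tools ship much longer ones.
STOPWORDS = {"the", "a", "an", "is", "he", "his", "and", "of", "to", "in", "i", "too"}


def word_frequencies(responses, extra_exclusions=()):
    """Count words across responses, skipping stopwords and extra exclusions."""
    excluded = STOPWORDS | {w.lower() for w in extra_exclusions}
    words = []
    for response in responses:
        words.extend(re.findall(r"[a-z']+", response.lower()))
    return Counter(w for w in words if w not in excluded)


# Hypothetical answers to "What don't you like about Tom Cruise?"
responses = [
    "Tom is arrogant",
    "He is arrogant and loud",
    "Tom Cruise is too intense",
]

# Exclude words that carry no information for this question.
freqs = word_frequencies(responses, extra_exclusions=["Tom", "Cruise"])
for word, count in freqs.most_common():
    print(word, count)
```

Passing `extra_exclusions` is the code equivalent of taking ‘Tom’ and ‘Cruise’ out of the cloud by hand.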

Analyzing Open-Enders: The Once Great Trade-Off

Once upon a time, open-ended survey data presented market researchers with a significant trade-off. This text data is highly effective at capturing the emotions and motivations behind the answers respondents give, digging into the ‘why’ behind consumer behavior in a way that closed-ended questions cannot. However, manually reviewing these responses and categorizing the answers into themes is time-consuming and expensive, and it lacks the accuracy and scalability that quantitative methods bring.

AI-powered text analysis combines the depth of qualitative research with the accuracy of quantitative approaches. Researchers can now easily analyze open-ended responses at scale and with accuracy.

Try Displayr’s text analysis software for free today.