Text data can be an unwieldy beast. Whether you're analyzing tweets, reviews, or open-ended responses from a survey, you will usually need to do some cleaning and processing of the text before you can conduct your analysis.

Why is this the case? When you analyze text, you are counting how often individual words or phrases are used and checking how their presence correlates with the outcomes you want to understand. As the amount of text in your data set grows, so does the number of different words used. People also invariably make spelling errors, and each misspelling counts as a separate "word", so the number of unique words grows even further.

The goal of cleaning and processing the text is to reduce the number of unique words that you are including in your analysis to a manageable, meaningful collection.

Common Text Analysis Steps

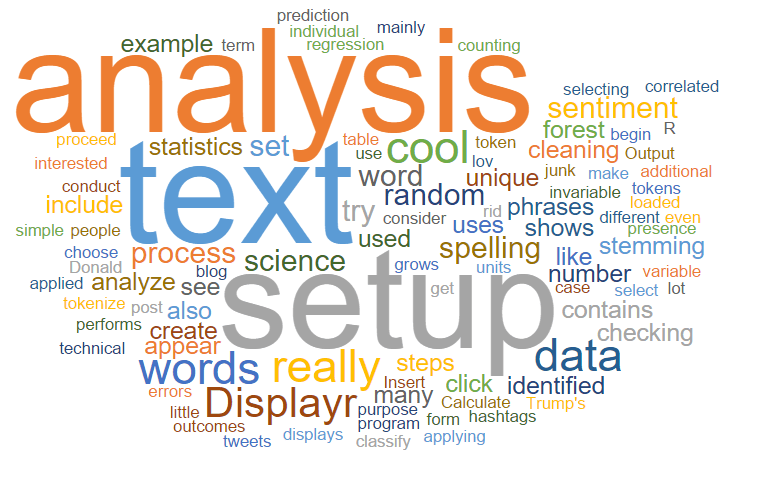

Once your data set is loaded into Displayr, start the setup and cleaning process by selecting Insert > More > Text Analysis > Setup Text Analysis. This creates an R Output that uses R to process your text. In it, you select the variable that contains your text and then choose which cleaning steps to apply. Displayr performs the cleaning and displays a table of the unique tokens, showing how many times each one was identified in your text. Token is the technical term for the words or phrases that form the units of analysis.
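
To make the idea of a token table concrete, here is a minimal Python sketch of what such a table contains. It is not Displayr's implementation, which runs in R. The `tokenize` rule is an assumption made for illustration: lowercase the text and keep runs of letters, digits, and apostrophes.

```python
import re
from collections import Counter


def tokenize(text):
    """Hypothetical tokenizer: lowercase, then keep runs of letters, digits and apostrophes."""
    return re.findall(r"[a-z0-9']+", text.lower())


def token_table(responses):
    """Count how many times each token appears across all responses."""
    counts = Counter()
    for response in responses:
        counts.update(tokenize(response))
    return counts


responses = [
    "Great service, great food!",
    "The food was cold.",
    "Service was slow but the food was great.",
]
table = token_table(responses)
print(len(table), "unique tokens")
for token, n in table.most_common(3):
    print(token, n)
```

Even these three short responses produce eight unique tokens. Several of them, such as "the" and "was", carry little meaning, and the cleaning steps below are designed to shrink a list like this.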

This example shows the words identified in a set of Donald Trump's tweets (see "Sentiment analysis is simple" for more on this data set). We have applied very little cleaning, so the table contains a lot of junk, mainly from hashtags and links in the text. Additional cleaning steps can remove the junk and reduce the list of 638 unique words to a smaller, more meaningful set.
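
A regular-expression pass before tokenizing can deal with links and hashtags. The sketch below shows one reasonable approach and is an assumption, not a description of Displayr's built-in behavior. It deletes URLs, then strips the # and @ symbols but keeps the word that follows them. The sample tweet is invented for illustration.

```python
import re

URL_PATTERN = re.compile(r"https?://\S+")
# Matches a hashtag or @mention and captures the word after the symbol.
TAG_PATTERN = re.compile(r"[#@](\w+)")


def strip_junk(tweet):
    """Remove links, drop the # and @ symbols, and collapse leftover whitespace."""
    without_links = URL_PATTERN.sub(" ", tweet)
    without_symbols = TAG_PATTERN.sub(r"\1", without_links)
    return " ".join(without_symbols.split())


print(strip_junk("Thanks for coming tonight! #rally https://t.co/abc123"))
# Thanks for coming tonight! rally
```

Keeping the word behind a hashtag is a judgment call. To drop hashtags entirely, replace the whole match with a space instead.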

The different kinds of processing steps are described as follows.

Stopword removal

A stopword is a common English word that conveys little meaning by itself (e.g., "the", "of", and "a"). Stopword removal is such a standard part of text analysis that Displayr does it automatically, which is why none of these words appear in the table above.
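
Conceptually, stopword removal is a set-membership filter. Below is a minimal Python sketch that assumes a tiny hand-written stopword list. Displayr's actual list is not reproduced here.

```python
# A tiny, illustrative stopword list; real lists are much longer.
STOPWORDS = {"the", "of", "and", "a", "an", "to", "is", "was", "in", "it"}


def remove_stopwords(tokens, stopwords=STOPWORDS):
    """Return the tokens with every stopword removed, preserving order."""
    return [token for token in tokens if token not in stopwords]


print(remove_stopwords(["the", "food", "was", "great"]))  # ['food', 'great']
```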

Correct spelling

As the name suggests, this process involves replacing incorrectly spelled words with the correct word.

In Displayr, this is done by checking the Correct spelling option. The algorithm checks words against an English-language dictionary. It also accounts for words that are not in the dictionary but occur very frequently in the text, and leaves them uncorrected. This prevents terms such as brand names, which probably don't appear in the dictionary, from being mistakenly "corrected".
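
The general idea is to correct rare unknown words and leave frequent ones alone. It can be sketched in Python with the standard library's `difflib.get_close_matches`. This is not Displayr's algorithm. The five-word `DICTIONARY`, the `min_count_to_keep` threshold of 3, and the 0.8 similarity cutoff are all hypothetical choices made for illustration.

```python
from collections import Counter
from difflib import get_close_matches

# Hypothetical stand-in for a full English-language dictionary.
DICTIONARY = ["service", "delicious", "waiter", "food", "great"]


def correct_spelling(tokens, dictionary=DICTIONARY, min_count_to_keep=3, cutoff=0.8):
    """Replace rare out-of-dictionary tokens with their closest dictionary word."""
    counts = Counter(tokens)
    known = set(dictionary)
    corrected = []
    for token in tokens:
        # Keep dictionary words, and frequent unknown words (likely brand names or jargon).
        if token in known or counts[token] >= min_count_to_keep:
            corrected.append(token)
            continue
        matches = get_close_matches(token, dictionary, n=1, cutoff=cutoff)
        corrected.append(matches[0] if matches else token)
    return corrected


tokens = ["servcie", "food", "delicous", "qantas", "qantas", "qantas"]
print(correct_spelling(tokens))
```

In this run, "servcie" and "delicous" are corrected to "service" and "delicious". "qantas" is not in the dictionary, but it appears three times and is therefore left untouched.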

Perform stemming

Stemming removes plurals, tense endings, and other suffixes from words to find the root word, or stem. For example, the words lover, loved, and loving all share the stem lov.

Displayr's stemming heuristics replace all words that share a stem with whichever of those words is most common in your text.
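
Stemming and mapping each stem back to its most common word both fit in a few lines of Python. The `crude_stem` function is a deliberately naive suffix stripper written for this example; production systems use established algorithms such as the Porter stemmer. Nothing here reflects Displayr's actual heuristics.

```python
from collections import Counter

# Naive, illustrative suffix list, checked in order; not a real stemming algorithm.
SUFFIXES = ("ing", "ed", "er", "es", "s")


def crude_stem(word):
    """Strip the first matching suffix, as long as at least three letters remain."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def stem_to_most_common(tokens):
    """Replace each token with the most frequent token in the text sharing its stem."""
    counts = Counter(tokens)
    best_for_stem = {}
    for word, n in counts.items():
        stem = crude_stem(word)
        # On a tie, the word seen first keeps its place.
        if stem not in best_for_stem or n > counts[best_for_stem[stem]]:
            best_for_stem[stem] = word
    return [best_for_stem[crude_stem(token)] for token in tokens]


print(crude_stem("lover"), crude_stem("loved"), crude_stem("loving"))  # lov lov lov
print(stem_to_most_common(["loved", "loving", "loved", "lover", "food"]))
# ['loved', 'loved', 'loved', 'loved', 'food']
```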

Replace words/synonyms

Replacing words with synonyms is another standard processing step. For example, in a study of the airline market, you may want to replace all appearances of baggage with luggage.

In Displayr, enter replacements in the Replace words field using the syntax <current word>:<replacement word>. For example, to replace the word baggage with the word luggage, enter baggage:luggage. Separate multiple replacements with commas.
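
The comma-separated old:new syntax is easy to mimic. The Python sketch below parses a string in that format and applies it to a list of tokens. The parsing details (trimming spaces, splitting on the first colon, rejecting pairs without a colon) are assumptions. They do not describe how Displayr reads the field.

```python
def parse_replacements(spec):
    """Parse 'old:new, old2:new2' into a {old: new} dictionary."""
    mapping = {}
    for pair in spec.split(","):
        pair = pair.strip()
        if not pair:
            continue  # tolerate trailing or doubled commas
        if ":" not in pair:
            raise ValueError(f"expected <current word>:<replacement word>, got {pair!r}")
        old, new = pair.split(":", 1)
        mapping[old.strip()] = new.strip()
    return mapping


def replace_words(tokens, mapping):
    """Swap each token for its replacement, leaving unlisted tokens unchanged."""
    return [mapping.get(token, token) for token in tokens]


mapping = parse_replacements("baggage:luggage, bags:luggage")
print(replace_words(["lost", "baggage", "again"], mapping))  # ['lost', 'luggage', 'again']
```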

Remove these words

In some cases your sample will contain frequent words that you don't want to keep. In Displayr, type them into the Remove these words field, separated by commas. They are added to the standard list of stopwords.
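
Adding your own words to the stopword list amounts to a set union. Below is a minimal sketch that assumes a small, hypothetical `BASE_STOPWORDS` set and comma-separated input.

```python
# Hypothetical base list standing in for the standard stopwords.
BASE_STOPWORDS = {"the", "of", "and", "a", "to", "was"}


def build_stopwords(extra):
    """Combine the base stopwords with a comma-separated list of extra words."""
    extra_words = {word.strip().lower() for word in extra.split(",") if word.strip()}
    return BASE_STOPWORDS | extra_words


def remove_words(tokens, stopwords):
    """Drop every token that appears in the stopword set."""
    return [token for token in tokens if token not in stopwords]


stopwords = build_stopwords("flight, Airline")
print(remove_words(["the", "flight", "was", "late"], stopwords))  # ['late']
```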

A useful way to work in Displayr is to run Insert > More > Text Analysis > Search alongside the text analysis setup. The setup shows you the most frequent words. To decide what to do with them (e.g., whether to merge them with other words), however, you need to know the context in which they appear, and the search feature makes that context easy to check.
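
Looking up a word's context is essentially a keyword-in-context (KWIC) search. The Python function below is a rough stand-in for that idea, not Displayr's Search feature. The function name and the `width` parameter (the number of context characters kept on each side) are hypothetical.

```python
import re


def keyword_in_context(texts, keyword, width=20):
    """Return a snippet of surrounding text for every whole-word match of keyword."""
    pattern = re.compile(r"\b" + re.escape(keyword) + r"\b", re.IGNORECASE)
    snippets = []
    for text in texts:
        for match in pattern.finditer(text):
            start = max(0, match.start() - width)
            snippets.append(text[start : match.end() + width])
    return snippets


texts = ["The bag fee was far too high", "My bags arrived late", "No complaints"]
print(keyword_in_context(texts, "bag", width=8))  # ['The bag fee was']
```

The whole-word match skips "bags". This kind of gap is exactly what checking context helps you spot before you decide whether to merge or replace words.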

Phrases

By default, tokens are single words. However, it is also possible to explicitly list phrases as tokens.

In Displayr, enter phrases in the Phrases field, separated by commas. In most cases the output of the cleaning is a collection of single words. If you want certain word pairs to be treated together as phrases, list them here.
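
A single left-to-right pass over the token list is enough to turn listed word pairs into single tokens. This Python version illustrates the general technique under stated assumptions and is not Displayr's implementation. It handles only two-word phrases and joins them with an underscore; both are choices made for this example.

```python
def merge_phrases(tokens, phrases_spec):
    """Join listed two-word phrases into single tokens, e.g. 'leg room' -> 'leg_room'."""
    pairs = {
        tuple(phrase.lower().split())
        for phrase in phrases_spec.split(",")
        if len(phrase.split()) == 2
    }
    merged, i = [], 0
    while i < len(tokens):
        if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) in pairs:
            merged.append(f"{tokens[i]}_{tokens[i + 1]}")
            i += 2  # skip both words of the phrase
        else:
            merged.append(tokens[i])
            i += 1
    return merged


print(merge_phrases(["great", "customer", "service", "and", "leg", "room"],
                    "customer service, leg room"))
# ['great', 'customer_service', 'and', 'leg_room']
```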

Minimum word frequency

This sets the bar for how often a word must appear in the text before it is included in your analysis. It is the most powerful tool for reducing the sheer number of words. If you have too many words, raise the bar.
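
A minimum word frequency is a simple count threshold. The minimal Python sketch below assumes that each response has already been tokenized into a list of words. `min_count` plays the role of the bar, and the function name is hypothetical.

```python
from collections import Counter


def apply_min_frequency(tokenized_docs, min_count):
    """Keep only tokens that occur at least min_count times across all documents."""
    counts = Counter(token for doc in tokenized_docs for token in doc)
    kept = {token for token, n in counts.items() if n >= min_count}
    filtered = [[token for token in doc if token in kept] for doc in tokenized_docs]
    return filtered, kept


docs = [["food", "great"], ["food", "cold"], ["food", "great", "slow"]]
filtered, kept = apply_min_frequency(docs, min_count=2)
print(sorted(kept))  # ['food', 'great']
print(filtered)      # [['food', 'great'], ['food'], ['food', 'great']]
```

Raising `min_count` to 3 would leave only "food" in this toy example.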

Cleaning and setup is an iterative process. The table shows the current set of words kept, and you can then make decisions about which of these to keep, remove, or modify. Simply change the inputs as desired. Here is how the table looks after applying additional cleaning:

What analyses can be done?

Where do we go from here? The setup item created above contains all the information about the words we want to analyze. It serves as the input to further analyses, including:

- Predictive trees. Use the words in your text to predict the values of your most important variables, such as Twitter Likes, customer satisfaction, or Net Promoter Score. Create one with Insert > More > Text Analysis > Techniques > Predictive Tree. See "Text analysis: predicting engagement from tweets" for more on these.

- Sentiment analysis. Measure how positive or negative the language in your text is, and correlate this with other variables. Create scores with Insert > More > Text Analysis > Techniques > Save Sentiment Scores. See "Sentiment analysis is simple" for more on how sentiment analysis works.

- Term document matrix. Create a table (or matrix) that records the tokens appearing in each piece of text. This translates your text into a numeric format that statistical algorithms can work with, and makes the results of your text cleaning available to any custom R code you write. See "Text analysis: Hooking up your term document matrix to custom R code" for more.
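
The minimal Python sketch below shows the general shape of such a matrix, built from already-cleaned token lists. It is an illustration only, because Displayr produces its matrix in R. The layout used here is an assumption: one row per document, one column per token, and cells holding counts. Some tools record presence or absence instead of counts, or put terms in the rows.

```python
from collections import Counter


def term_document_matrix(tokenized_docs):
    """Return (vocabulary, rows): one row per document, one count column per token."""
    vocabulary = sorted({token for doc in tokenized_docs for token in doc})
    rows = []
    for doc in tokenized_docs:
        counts = Counter(doc)
        rows.append([counts[token] for token in vocabulary])
    return vocabulary, rows


vocabulary, matrix = term_document_matrix([["food", "great", "food"], ["service"]])
print(vocabulary)  # ['food', 'great', 'service']
print(matrix)      # [[2, 1, 0], [0, 0, 1]]
```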

Text Analysis in 2025

The process outlined above gives a great overview of the theoretical side of text analysis. Steps like stemming, stopword removal, and minimum word frequency filtering still play an essential role in helping researchers generate insights from text.

The biggest change since this step-by-step guide was first written has been the advance of artificial intelligence (AI). AI text analysis now automates the majority of these steps. As a result, sentiment analysis, predictive trees, and many other text analytics techniques can be performed in a matter of seconds, allowing you to analyze more text, faster and with greater accuracy.
