regression.

A well-known problem with linear regression, binary logit, ordered logit, and other GLMs is that a small number of rogue observations can cause the results…

In this post I describe how to quickly create a quad map in Displayr. The example uses a Shapley Regression to work out the relative…

Rather than measuring just one type of customer satisfaction, it's useful to measure these three aspects of it.

This post describes how to interpret the coefficients, also known as parameter estimates, from logistic regression (a.k.a. binary logit).

This is a practical guide to logistic regression. To get the most out of this post, I recommend you follow along with my instructions and…

Logistic regression (also known as binary logistic regression) is a predictive modeling technique used to predict outcomes involving two options.

Logistic regression is a type of regression analysis used when the dependent variable is binary (i.e., has only two possible outcomes).

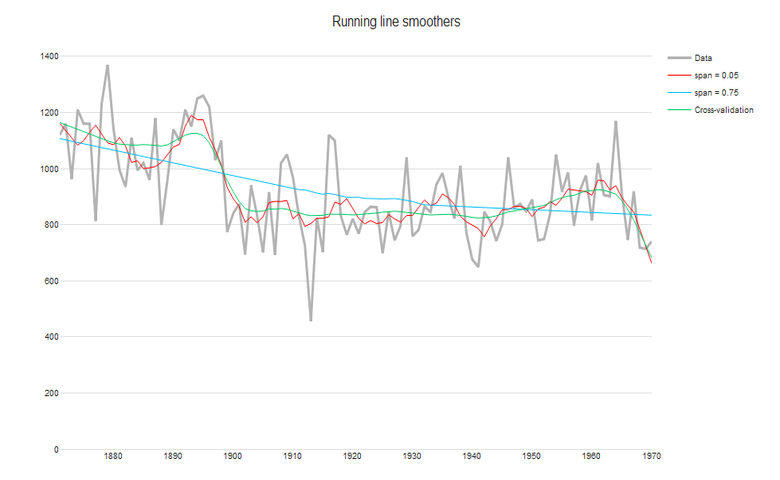

For time-series data, you'll want to separate long-term trends and seasonal changes from random fluctuations. Find out which time smoother to use.

The variance inflation factor (VIF) quantifies the extent of correlation between one predictor and the other predictors in a model.
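
As a rough illustration of that definition, the sketch below computes a VIF for each predictor by regressing it on the remaining predictors and taking 1 / (1 − R²). The function name `vif` and the simulated data are assumptions made for this example only; they do not come from the post or from any particular library.

```python
import numpy as np

def vif(X):
    """Return one variance inflation factor per column of X.

    Hypothetical helper for illustration: each predictor is regressed
    (with an intercept) on all the other predictors, and
    VIF = 1 / (1 - R^2) = SS_total / SS_residual.
    """
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    factors = np.empty(p)
    for j in range(p):
        y = X[:, j]
        others = np.delete(X, j, axis=1)
        design = np.column_stack([np.ones(n), others])  # add intercept
        coef, *_ = np.linalg.lstsq(design, y, rcond=None)
        resid = y - design @ coef
        ss_res = resid @ resid
        ss_tot = ((y - y.mean()) ** 2).sum()
        factors[j] = ss_tot / ss_res
    return factors

# Simulated data (assumed, not from the post): x3 is nearly a copy of x1,
# so x1 and x3 should have large VIFs while x2 stays close to 1.
rng = np.random.default_rng(0)
x1 = rng.normal(size=500)
x2 = rng.normal(size=500)
x3 = x1 + 0.1 * rng.normal(size=500)
print(vif(np.column_stack([x1, x2, x3])))
```

A VIF near 1 means a predictor is nearly uncorrelated with the others; the larger the value, the more of that predictor's variance is explained by the rest of the model.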

Although PLS and Johnson's Relative Weights are both techniques for dealing with correlations between predictors, they give fundamentally different results.

Why is Multiple Linear Regression the standard technique taught for Key Driver Analysis when it gets it so wrong? The better method is Johnson’s Relative We

Partial Least Squares (PLS) is a popular method for relative importance analysis in fields where the data typically includes more predictors than observations. Relative importance analysis…

5 ways of presenting the results of key driver analysis techniques, such as Shapley Value, Kruskal Analysis, and Relative Weights.

Shapley regression has been gaining popularity in recent years and has been (re-)invented multiple times. However, relative weights should be used instead.
